Facebook AI Research (FAIR) said it is open-sourcing wav2letter@anywhere, a deep learning-based inference framework built for fast online automatic speech recognition in cloud or embedded edge environments.

Most existing online speech recognition solutions support only recurrent neural networks (RNNs). For wav2letter@anywhere, Facebook uses a fully convolutional acoustic model instead, which results in a 3x throughput improvement on certain inference models and state-of-the-art performance on LibriSpeech.

Wav2letter@anywhere builds on wav2letter and wav2letter++, FAIR’s neural network-based speech recognition systems. When wav2letter++ was released in December 2018, FAIR called it the fastest open-source speech recognition system available.

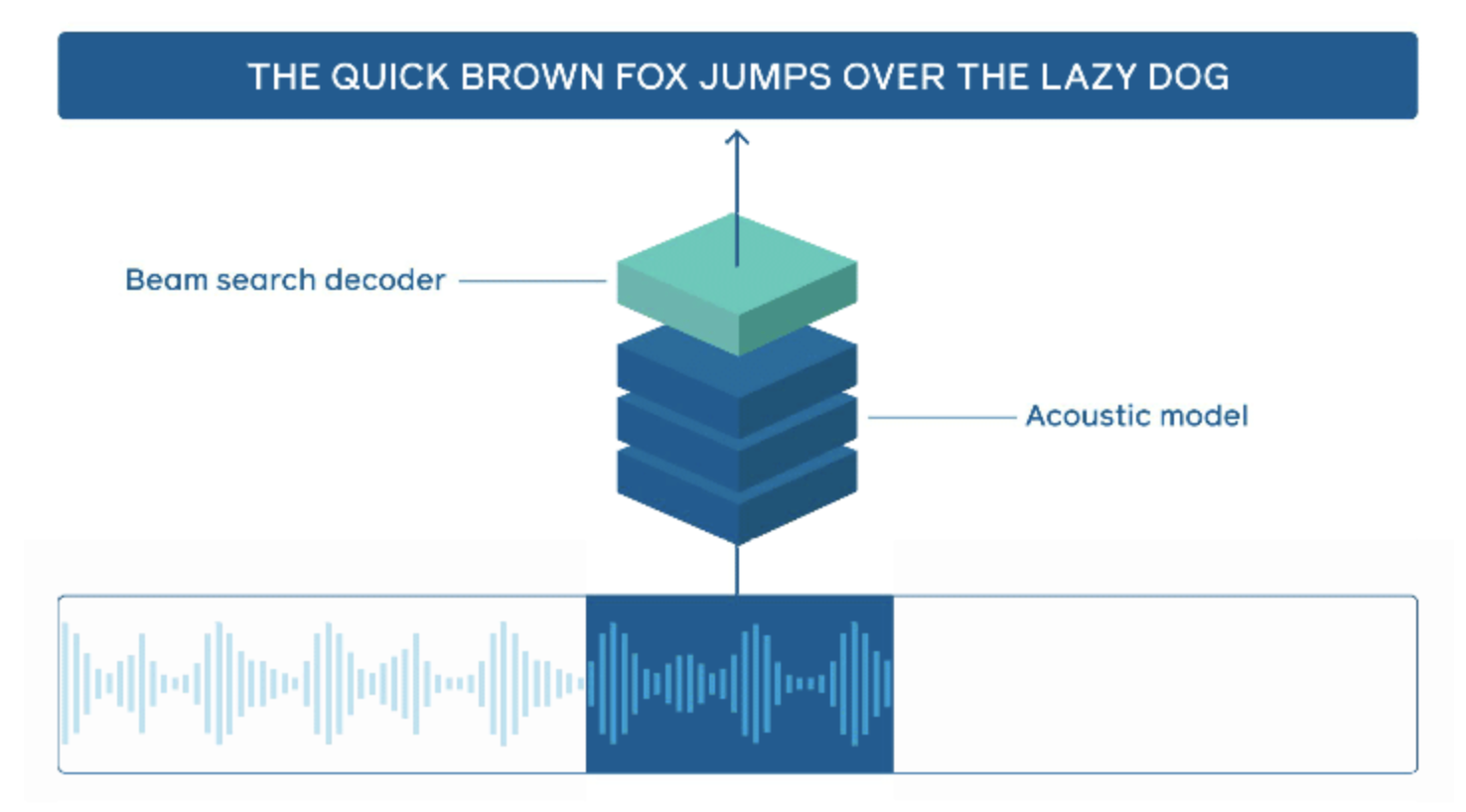

Automatic speech recognition, or ASR, is used to turn audio of spoken words into text, then infer the speaker’s intent in order to carry out a task. An API available on GitHub through the wav2letter++ repository is built to support concurrent audio streams and popular kinds of deep learning speech recognition models, such as convolutional neural networks (CNNs) and recurrent neural networks (RNNs), in order to deliver the scale necessary for online ASR.

Wav2letter@anywhere achieves a better word error rate (WER) than two baseline models built on bidirectional LSTM RNNs, according to a paper released last week by eight FAIR researchers from labs in New York City and at company headquarters in Menlo Park. Latency-controlled bidirectional LSTMs are a common choice for online speech recognition today.

“The system has almost three times the throughput of a well-tuned hybrid ASR baseline while also having lower latency and a better word error rate,” the group of researchers said. “While latency controlled bi-directional LSTMs are commonly used in online speech recognition, incorporating future context with convolutions can yield more accurate and lower latency models. We find that the TDS convolution can maintain low WERs with a limited amount of future context.”
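For readers unfamiliar with the metric, word error rate is conventionally the word-level edit distance between a reference transcript and the recognizer's output, divided by the number of reference words. The sketch below is a minimal illustration of that standard definition, not code from wav2letter@anywhere; the function name `word_error_rate` and the whitespace tokenization are assumptions made for the example.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length.

    Hypothetical helper for illustration; tokenizes on whitespace only.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # One dropped word out of six reference words.
    print(word_error_rate("the cat sat on the mat", "the cat sat on mat"))
```

A lower value is better, and because insertions count as errors the ratio can exceed 1.0 when the hypothesis is much longer than the reference.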

Low-latency acoustic modeling

An important building block of wav2letter@anywhere is the time-depth separable (TDS) convolution, which yields dramatic reductions in model size and computational flops while maintaining accuracy. We use asymmetric padding for all the convolutions, adding more padding toward the beginning of the input. This reduces the future context seen by the acoustic model, thus reducing the latency.
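The effect of asymmetric padding can be seen in a toy 1-D convolution. The sketch below is an illustration only, not the wav2letter@anywhere implementation: the function name `conv1d_asymmetric` and the `future_context` parameter are hypothetical, and a real TDS block adds more structure than a single convolution. It shows that shifting padding toward the beginning of the input reduces how many future frames each output frame depends on.

```python
def conv1d_asymmetric(signal, kernel, future_context):
    """Same-length 1-D convolution (cross-correlation) with asymmetric zero padding.

    Output frame t depends on input frames
    t - (len(kernel) - 1 - future_context) through t + future_context.
    Hypothetical helper for illustration.
    """
    k = len(kernel)
    if not 0 <= future_context <= k - 1:
        raise ValueError("future_context must be between 0 and len(kernel) - 1")
    left = k - 1 - future_context  # padding at the beginning (past side)
    right = future_context         # padding at the end (future side)
    padded = [0.0] * left + list(signal) + [0.0] * right
    return [
        sum(w * padded[t + i] for i, w in enumerate(kernel))
        for t in range(len(signal))
    ]


def first_affected_frame(output):
    """Index of the first output frame that saw the impulse."""
    return next(i for i, value in enumerate(output) if value != 0)


if __name__ == "__main__":
    impulse = [0.0] * 8
    impulse[5] = 1.0          # a single "event" at frame 5
    kernel = [1.0] * 5

    symmetric = conv1d_asymmetric(impulse, kernel, future_context=2)
    asymmetric = conv1d_asymmetric(impulse, kernel, future_context=1)

    # With symmetric padding, output frame 3 already needs input frame 5
    # (two frames of lookahead); with more padding at the start, the first
    # output that needs frame 5 is frame 4 (one frame of lookahead).
    print(first_affected_frame(symmetric), first_affected_frame(asymmetric))
```

In a streaming setting, less lookahead means the model has to wait for fewer incoming audio frames before it can emit each output, which is the latency reduction described above.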

In comparing our system with two strong baselines (an LC BLSTM + lattice-free MMI hybrid system and an LC BLSTM + RNN-T end-to-end system) on the same benchmark, we were able to achieve better WER performance, throughput, and latency. Most notably, the models are 3x faster even when the inference is run in FP16, while the inference for baselines is run in INT8.

The advances are made possible through improvements to convolutional acoustic models called time-depth separable (TDS) convolutions, an approach laid out by Facebook at Interspeech 2019 last fall that reduces latency and delivers state-of-the-art performance on LibriSpeech, a collection of 1,000 hours of spoken English data.

Using CNNs for speech inference is a departure from trends in natural language models, which look to recurrent neural networks or Transformer-based models like Google’s Bidirectional Encoder Representations from Transformers (BERT) for high performance. Separable models may be best known for their applications in computer vision, such as Google’s MobileNet.

The launch of wav2letter@anywhere follows the release of the Pythia framework for image and language models, as well as novel work like wav2vec for online speech recognition and RoBERTa, a model based on Google’s BERT that climbed to the #1 spot on the GLUE benchmark leaderboard this summer but has since fallen to the #8 spot.